As artificial intelligence systems continue to shape digital experiences, accurate and responsible content moderation has become increasingly critical. Social media platforms, e-commerce websites, news portals, and AI-powered applications process enormous amounts of user-generated content every day. Within this data, harmful and misleading content poses serious risks to users, businesses, and society. From hate speech and misinformation to spam and manipulated media, harmful content can spread rapidly when effective monitoring systems are not in place.

While automated moderation technologies have improved significantly, AI systems still rely heavily on human expertise to recognize context, nuance, intent, and cultural sensitivity. This is where professional annotators play a central role. At Annotera, we understand that human annotators are essential to training safer and smarter AI systems capable of identifying harmful and misleading content accurately.

Understanding Harmful and Misleading Content

Harmful content refers to any digital material that can negatively affect individuals, communities, or organizations. This includes abusive language, cyberbullying, extremist propaganda, violent content, adult material, scams, and discriminatory speech. Misleading content, on the other hand, includes misinformation, fake news, manipulated media, deceptive advertising, and false claims intended to influence public opinion or user behavior.

AI moderation systems must be trained to identify these categories with precision. However, harmful content is rarely straightforward. The same phrase may be offensive in one context but harmless in another. Sarcasm, coded language, memes, and regional slang further complicate detection. Human annotators help bridge this gap by providing contextual understanding during the training process.

Why Human Annotators Are Essential

AI models learn from labeled datasets. Annotators review text, images, videos, and audio files and assign labels based on predefined guidelines. These annotations teach machine learning systems how to classify content correctly.

A professional data annotation company like Annotera ensures that datasets are accurately labeled to improve the effectiveness of AI moderation models. Human annotators bring several advantages that automated systems alone cannot provide.

Contextual Interpretation

One of the biggest challenges in moderation is understanding context. A sentence may appear aggressive but actually be part of a joke or satire. Similarly, misinformation may be presented subtly without explicit false statements.

Human annotators analyze the surrounding context, tone, intent, and cultural references before assigning labels. Their ability to interpret nuanced communication significantly improves AI learning accuracy.

Detecting Evolving Harmful Trends

Harmful content evolves continuously. Users often create new slang, coded terms, or visual manipulations to bypass moderation filters. AI systems trained on outdated datasets may fail to recognize these emerging patterns.

Annotators continuously update datasets by identifying new forms of harmful behavior. Through ongoing monitoring and annotation, they help AI systems adapt to changing online threats more effectively.

Reducing False Positives and False Negatives

Incorrect moderation decisions can damage user trust. False positives occur when harmless content is wrongly flagged, while false negatives happen when harmful material is missed.

Skilled annotators help minimize these errors by carefully reviewing ambiguous content and maintaining annotation consistency. Their work directly improves the reliability of moderation systems across platforms.
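As a minimal sketch of how these two error types can be measured, the Python snippet below compares a model's flags against annotator-reviewed labels. The function name, the `ErrorRates` type, and the six-item sample are illustrative assumptions, not part of any specific moderation platform.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ErrorRates:
    false_positive_rate: float  # harmless items wrongly flagged / all harmless items
    false_negative_rate: float  # harmful items missed / all harmful items


def moderation_error_rates(gold: Sequence[bool], predicted: Sequence[bool]) -> ErrorRates:
    """Compare model flags with annotator (gold) labels; True means harmful."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    false_positives = sum(1 for g, p in zip(gold, predicted) if not g and p)
    false_negatives = sum(1 for g, p in zip(gold, predicted) if g and not p)
    harmless_total = sum(1 for g in gold if not g)
    harmful_total = len(gold) - harmless_total
    return ErrorRates(
        false_positive_rate=false_positives / harmless_total if harmless_total else 0.0,
        false_negative_rate=false_negatives / harmful_total if harmful_total else 0.0,
    )


# Hypothetical sample: six items labeled by annotators and scored by a model.
gold = [True, True, True, False, False, False]
predicted = [True, True, False, True, False, False]
rates = moderation_error_rates(gold, predicted)
print(rates)
```

In this sample, one of three harmless items is wrongly flagged and one of three harmful items is missed, so both rates come out to one third. Tracking the two rates separately matters because a platform may tolerate one kind of error more than the other.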

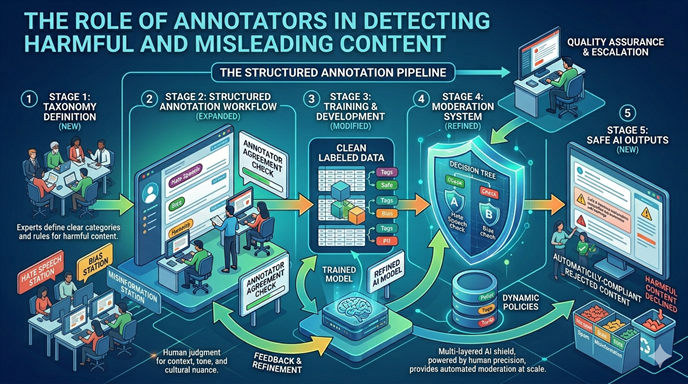

The Annotation Process in Content Moderation

Effective moderation training requires structured annotation workflows. A trusted text annotation company follows detailed processes to ensure quality and scalability.

Data Collection and Categorization

The process begins with collecting diverse datasets from multiple sources. Content is then categorized into moderation classes such as hate speech, misinformation, harassment, self-harm, violence, or spam.

Diverse datasets are important because moderation systems must function across different demographics, languages, and communication styles.

Annotation Guidelines

Clear annotation guidelines are critical for consistency. Annotators receive instructions defining each content category, severity level, and escalation protocol.

For example, annotators may distinguish between:

- Mild offensive language

- Targeted harassment

- Threatening behavior

- Coordinated misinformation campaigns

Well-defined rules help ensure that all annotators apply labels consistently across datasets.
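One way to make such guidelines machine-checkable is to encode each category with its definition, severity level, and escalation rule. The Python sketch below is a hypothetical schema: the label names, severity assignments, and escalation flags are assumptions chosen for illustration, not an actual moderation policy.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class GuidelineEntry:
    label: str
    definition: str
    severity: Severity
    escalate: bool  # whether items with this label go to a senior reviewer


# Hypothetical entries mirroring the four categories listed above.
GUIDELINES = {
    entry.label: entry
    for entry in [
        GuidelineEntry("mild_offensive_language",
                       "Profanity or insults without a specific target.",
                       Severity.LOW, False),
        GuidelineEntry("targeted_harassment",
                       "Repeated or personal abuse aimed at an individual.",
                       Severity.MEDIUM, False),
        GuidelineEntry("threatening_behavior",
                       "Statements expressing intent to cause harm.",
                       Severity.HIGH, True),
        GuidelineEntry("coordinated_misinformation",
                       "False claims spread by accounts acting together.",
                       Severity.HIGH, True),
    ]
}


def validate_label(label: str) -> GuidelineEntry:
    """Return the guideline entry for a label, rejecting undefined labels."""
    try:
        return GUIDELINES[label]
    except KeyError:
        raise ValueError(f"Unknown label: {label!r}") from None


entry = validate_label("threatening_behavior")
print(entry.severity.name, entry.escalate)
```

Rejecting labels that are not in the schema keeps free-form or misspelled categories out of the dataset, which supports the consistency that written guidelines aim for.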

Multi-Layer Quality Assurance

High-quality moderation datasets require extensive validation. Most professional annotation workflows include multiple review layers where senior annotators or quality analysts verify labeled data.

A reliable data annotation outsourcing provider typically uses:

- Consensus-based labeling

- Random quality audits

- Reviewer escalation systems

- Continuous annotator training

These measures help maintain dataset accuracy and improve model performance over time.
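Consensus-based labeling and reviewer escalation can be sketched together as a majority vote with an agreement threshold. The Python example below is an illustration only: the function name, the two-thirds default threshold, and the sample labels are assumptions, and real workflows may weight annotators or use other agreement measures.

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def consensus_label(
    votes: Sequence[str], min_agreement: float = 2 / 3
) -> Tuple[Optional[str], float]:
    """Return (label, agreement). The label is None when agreement is too
    low and the item should be escalated to a senior reviewer."""
    if not votes:
        raise ValueError("at least one vote is required")
    label, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    if agreement < min_agreement:
        return None, agreement
    return label, agreement


# Hypothetical votes from three annotators on two items.
clear_item = consensus_label(["hate_speech", "hate_speech", "spam"])
disputed_item = consensus_label(["hate_speech", "spam", "harassment"])
print(clear_item)
print(disputed_item)
```

Here the first item is accepted because two of three annotators agree, while the second has no majority and is returned with a `None` label so it can be routed for escalation.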

The Growing Importance of Text Annotation

Text remains one of the most common forms of online communication. Social media posts, chat messages, comments, product reviews, and forums generate massive volumes of textual data daily. As a result, text annotation has become a foundational component of AI moderation systems.

A professional text annotation outsourcing partner helps organizations process large-scale datasets efficiently while maintaining accuracy standards.

Sentiment and Toxicity Detection

Annotators label text data based on emotional tone and toxicity levels. AI systems then learn to identify:

- Hate speech

- Threats

- Bullying

- Manipulation

- Harassment

- Political misinformation

This enables platforms to automatically flag potentially harmful interactions before they escalate.

Intent Recognition

Intent detection is another critical area where annotators contribute significantly. Some harmful messages may appear harmless at first glance but carry hidden malicious intent.

Examples include:

- Coordinated disinformation campaigns

- Fraud attempts

- Grooming behavior

- Radicalization messaging

Human expertise is essential for accurately identifying these subtle patterns.

Challenges Faced by Annotators

Content moderation annotation is highly demanding work. Annotators often review disturbing, violent, or emotionally stressful content for extended periods. Maintaining both accuracy and mental well-being can be challenging.

At Annotera, we recognize the importance of ethical annotation practices and annotator support systems.

Emotional and Psychological Impact

Exposure to harmful content can affect mental health. Responsible annotation organizations provide:

- Wellness programs

- Psychological support

- Rotational workloads

- Safe working environments

Supporting annotators is essential for maintaining both workforce well-being and annotation quality.

Cultural and Linguistic Complexity

Online platforms operate globally, meaning moderation systems must understand multiple languages, dialects, and cultural contexts.

Annotators with regional expertise help AI systems avoid cultural bias and improve moderation fairness. This is particularly important when reviewing humor, slang, political discussions, or culturally sensitive topics.

How Annotation Improves AI Safety

The effectiveness of AI moderation systems depends directly on training data quality. Poorly labeled datasets can lead to biased or ineffective moderation decisions.

Partnering with an experienced data annotation company helps businesses develop safer AI systems capable of handling real-world moderation challenges.

Building Responsible AI Models

Annotated moderation datasets improve:

- Accuracy

- Bias reduction

- Contextual understanding

- Risk detection

- User safety

Human-in-the-loop annotation also enables continuous refinement as harmful behaviors evolve online.

Supporting Regulatory Compliance

Governments and regulatory bodies are introducing stricter requirements for online platform safety. AI moderation systems must demonstrate accountability, transparency, and fairness.

Professional annotation teams help organizations create compliant moderation frameworks supported by high-quality labeled data.

Why Businesses Choose Annotation Outsourcing

Many organizations choose data annotation outsourcing to scale moderation projects efficiently without compromising quality. Outsourcing provides access to trained annotation specialists, advanced workflows, and multilingual expertise.

A trusted text annotation company can support:

- Large-scale moderation projects

- Multilingual annotation

- 24/7 data processing

- Rapid dataset expansion

- Custom moderation taxonomies

This allows businesses to accelerate AI model development while maintaining operational flexibility.

Conclusion

As digital platforms continue to grow, harmful and misleading content remains one of the biggest challenges facing AI-driven ecosystems. Although automation plays an important role in moderation, human annotators remain the foundation of effective and responsible AI safety systems.

Their ability to interpret context, detect emerging threats, reduce moderation errors, and improve dataset quality is essential for building trustworthy AI models. Organizations that invest in professional annotation services gain stronger moderation accuracy, improved compliance, and safer user experiences.

At Annotera, we combine human expertise, scalable workflows, and advanced quality assurance processes to help businesses develop intelligent moderation systems capable of detecting harmful and misleading content with greater precision. As the demand for responsible AI grows, the role of skilled annotators will continue to be indispensable in shaping safer digital environments.